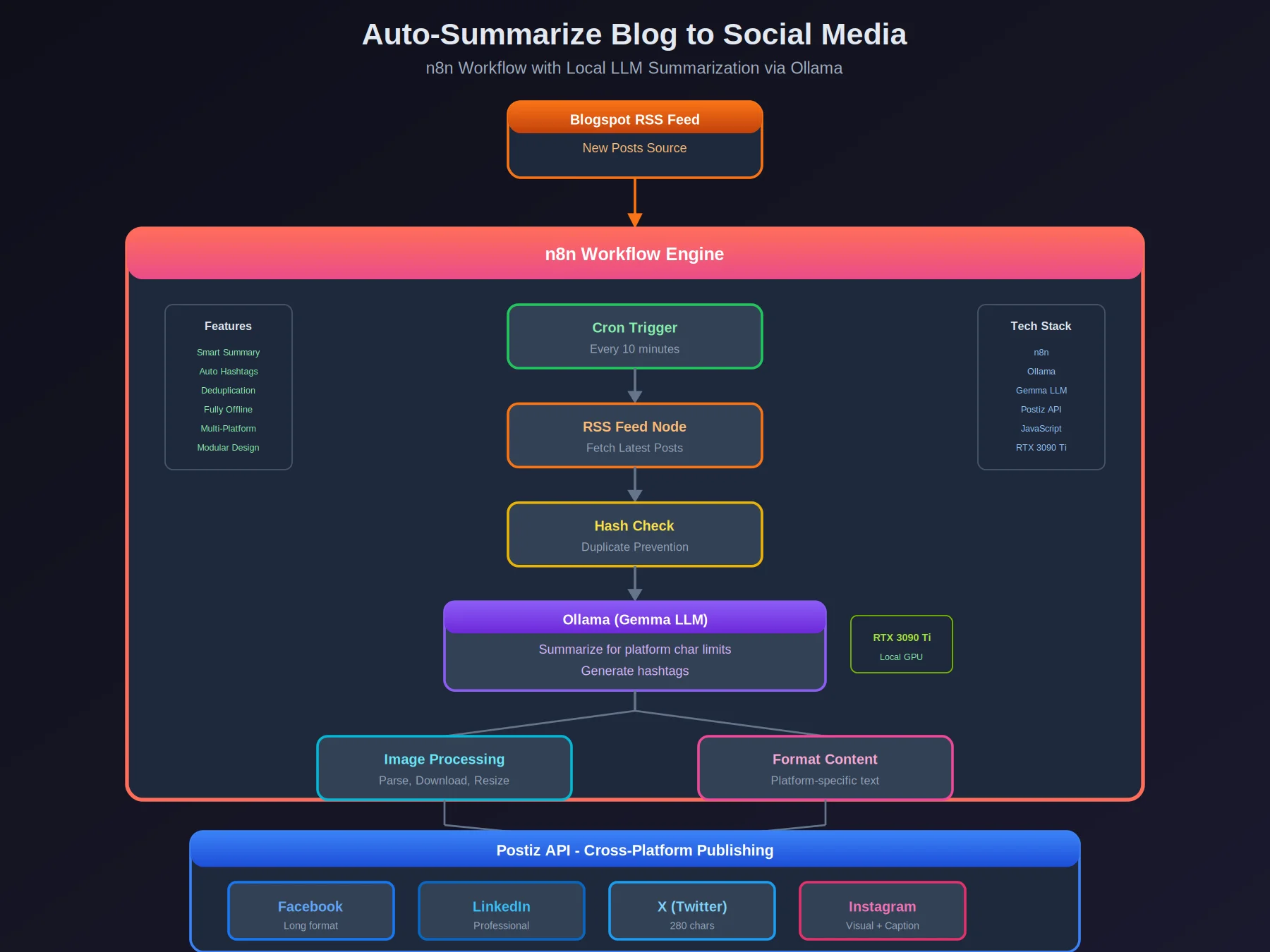

An n8n workflow that watches my blog RSS feed, summarizes new posts with a local LLM (Gemma on Ollama), and cross-posts to Facebook, LinkedIn, X, and Instagram. I write the post once; the workflow handles the rest.

Overview

| Aspect | Details |

|---|---|

| Platform | Self-hosted n8n |

| LLM | Gemma via Ollama (local GPU) |

| Social APIs | Postiz |

| Scheduling | Cron trigger (10 min) |

| Hardware | RTX 3090 Ti for inference |

How It Works

- Fetch - RSS feed polling for new blog posts

- Summarize - Ollama (Gemma) generates platform-specific summaries

- Extract - Parse HTML for images, download and resize

- Generate - AI creates relevant hashtags

- Publish - Postiz API posts to all platforms

- Dedupe - Hash-based tracking prevents duplicates

Architecture

┌─────────────────────────────────────────────────────────────────┐

│ Hugo RSS Feed │

└───────────────────────────┬─────────────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────────────┐

│ n8n Workflow │

│ ┌──────────────────────────────────────────────────────────┐ │

│ │ Cron Trigger (10 min) │ │

│ └──────────────────────────┬───────────────────────────────┘ │

│ │ │

│ ┌──────────────────────────▼───────────────────────────────┐ │

│ │ RSS Feed Node (Fetch Latest) │ │

│ └──────────────────────────┬───────────────────────────────┘ │

│ │ │

│ ┌──────────────────────────▼───────────────────────────────┐ │

│ │ Hash Check (Duplicate Prevention) │ │

│ └──────────────────────────┬───────────────────────────────┘ │

│ │ │

│ ┌──────────────────────────▼───────────────────────────────┐ │

│ │ Ollama Node (Gemma LLM) │ │

│ │ • Summarize (platform char limits) │ │

│ │ • Generate hashtags │ │

│ └──────────────────────────┬───────────────────────────────┘ │

│ │ │

│ ┌──────────────────────────▼───────────────────────────────┐ │

│ │ Image Extraction & Processing │ │

│ │ • HTML parsing │ │

│ │ • Download & resize │ │

│ └──────────────────────────┬───────────────────────────────┘ │

│ │ │

│ ┌──────────────────────────▼───────────────────────────────┐ │

│ │ Postiz API │ │

│ │ ┌─────────┐ ┌─────────┐ ┌─────────┐ ┌─────────┐ │ │

│ │ │Facebook │ │LinkedIn │ │ X │ │Instagram│ │ │

│ │ └─────────┘ └─────────┘ └─────────┘ └─────────┘ │ │

│ └──────────────────────────────────────────────────────────┘ │

└─────────────────────────────────────────────────────────────────┘

What Was Harder Than Expected

Getting the LLM to respect character limits was the recurring headache. X needs 280 characters, LinkedIn gets more room. The prompt engineering for consistent output length took more iteration than the actual workflow logic.

Postiz’s API has quirks — Instagram JSON handling in particular required workarounds that aren’t documented. The LLM output parsing needed to be fault-tolerant because Gemma occasionally returns slightly different JSON structures.

Duplicate prevention uses a simple link hash stored in a file. It works. The whole thing runs on the local RTX 3090 Ti — no API calls to OpenAI or anyone else.

Tech Stack

| Component | Technology |

|---|---|

| Automation | Self-hosted n8n with community nodes |

| AI | Gemma via Ollama |

| Social API | Postiz |

| Content | RSS feed parsing |

| Images | HTML parsing, HTTP download, resize |

| Deduplication | JavaScript hash with file tracking |

| Scheduling | Cron trigger |

The workflow is available as a template on n8n.io for anyone who wants to set up something similar.

Thanks to Grok (xAI) for help with debugging and optimization